Monday, October 31, 2022

Newton modified

Saturday, October 29, 2022

Gold standard

I have a great fondness for a glass of fine red wine or single malt scotch, but I have to admit something up front; to say I have an "undiscerning palate" is a considerable understatement.

I basically have two taste buds: "thumbs up" and "thumbs down." I know what I like, but that's about where it stops. On the other end of the spectrum from me are "supertasters" -- people who have a much greater acuity for the sense of taste than the rest of us slobs -- and they are in high demand working for food and drink manufacturers as taste testers, because they can pick up subtleties in flavor that bypass most people. They're the ones we have to thank for what you read on the labels in wine stores ("This vintage has a subtle nose of asphalt and boiled cabbage; the flavor contains notes of wet dog, garlic, and sour cream, with a delicate hint of chocolate at the finish").

I make fun, but I swear I once saw a sauvignon blanc described as tasting like "cat piss on a gooseberry bush." I had to try it.

It was actually rather nice. "Thumbs up."

What's always struck me about all this is how subjective it seems. So much of it is, both literally and figuratively, a matter of taste. This is why I thought it was fascinating that a new study has found a way to quantify the presence of congeners -- the chemicals other than alcohol introduced by the fermentation and aging processes -- which are the source of most of the flavor in wines, beers, and spirits.

A paper in ACS Applied Nano Materials, led by Jennifer Gracie of the University of Glasgow, describes a simple test for flavor in whisky using less than a penny's worth of soluble gold ions. It turns out that the aging process for whiskies involves storing them in charred oak barrels, and this introduces congeners that react strongly with gold, producing a striking red or purple color. The deeper the color, the more congeners are present -- and the more flavorful the whisky.

The authors write:

The maturation of spirit in wooden casks is key to the production of whisky, a hugely popular and valuable product, with the transfer and reaction of molecules from the wooden cask with the alcoholic spirit imparting color and flavor. However, time in the cask adds significant cost to the final product, requiring expensive barrels and decades of careful storage. Thus, many producers are concerned with what “age” means in terms of the chemistry and flavor profiles of whisky. We demonstrate here a colorimetric test for spirit “agedness” based on the formation of gold nanoparticles (NPs) by whisky. Gold salts were reduced by barrel-aged spirit and produce colored gold NPs with distinct optical properties... We conclude that age is not just a number, that the chemical fingerprint of key flavor compounds is a useful marker for determining whisky “age”, and that our simple reduction assay could assist in defining the aged character of a whisky and become a useful future tool on the warehouse floor.

Which is pretty cool. Better than relying on people like me, whose approach to drinking a nice glass of scotch is not to analyze it, but to pour a second round. I guess there's nothing wrong with knowing what you like -- even if you can't really put your finger on why you like it.

That's why we non-supertasters rely on studies like this one to provide a gold standard to make up for our own lack of perceptivity.

Friday, October 28, 2022

Odd number

A regular reader of Skeptophilia had an interesting response to a recent post wherein I considered the strong and weak versions of the Anthropic Principle -- the idea that the universe has its physical constants, and thus its overall conditions, fine-tuned to be conducive to the development and sustenance of life. The Strong Anthropic Principle considers that fine-tuning to be the deliberate dialing of the knobs by a creator of some sort; the Weak Anthropic Principle takes more the angle that of course the constants in our universe are set that way, because if they hadn't been, we wouldn't be here to comment upon it. The weak version, which has always made considerable sense to me, looks upon the particular values of the physical constants as being either (1) a happy accident, or (2) constrained by some mechanism we have yet to understand (in other words, there's a scientific reason why they are what they are, and they'd be the same in all possible universes, but we haven't yet figured out what that reason is).

The reader picked up on my citing the fine structure constant as an example of one of those perhaps-arbitrary physical constants, and wrote:

Interesting you should choose the fine structure constant as an example, because that's the one that has even the physicists perplexed as to why it has the value it does. There doesn't seem to be any a priori reason it is equal to 1/137, and it shows up in all sorts of seemingly unrelated realms of physics. Maybe God is trying to tell us something? If so, it very much remains to be seen what he's trying to tell us, but it's a curious number to say the least.

See if you can find what Richard Feynman and Wolfgang Pauli had to say about it, if you want a chuckle, albeit a rueful one.

He's not exaggerating that the fine structure constant comes up all over the place. It is usually defined as a measure of the strength with which charged particles interact with electromagnetic fields. The formula for it connects four other physical constants -- the charge of an electron, Planck's constant, the speed of light, and the electric permittivity of a vacuum. But this is where things start getting odd; because unlike most of the familiar constants in physics, the fine structure constant is dimensionless -- it has no units. No matter what system of units you're using for the four constituents, everything cancels out and you get a unit-free constant -- 1/137. (Actually, 1/137.035999206, but 1/137 is a decent approximation.) You can throw in the speed of light in furlongs per fortnight, and as long as you are consistent and have all the other distances in furlongs and all the other times in fortnights, it doesn't matter. It always comes out 1/137.
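If you want to check the unit-cancelling claim for yourself, here is a minimal sketch in Python. It computes the constant once from SI values and once from Gaussian (CGS) values. The numbers are the CODATA 2018 figures as I remember them, and the function names `alpha_si` and `alpha_cgs` are just labels I made up for the illustration. One caveat: in the Gaussian system the vacuum permittivity is folded into the unit of charge, so only three constants appear there.

```python
import math

# SI values (CODATA 2018). e, h, and c are exact by definition in the SI.
E_SI = 1.602176634e-19       # electron charge, coulombs
H_SI = 6.62607015e-34        # Planck's constant, joule-seconds
C_SI = 299_792_458.0         # speed of light, meters per second
EPS0_SI = 8.8541878128e-12   # vacuum permittivity, farads per meter

# Gaussian (CGS) values for the same quantities.
E_CGS = 4.803204713e-10      # electron charge, statcoulombs
HBAR_CGS = 1.054571817e-27   # reduced Planck's constant, erg-seconds
C_CGS = 2.99792458e10        # speed of light, centimeters per second


def alpha_si():
    """Fine structure constant from SI values: e^2 / (2 * eps0 * h * c)."""
    return E_SI**2 / (2 * EPS0_SI * H_SI * C_SI)


def alpha_cgs():
    """Same constant from Gaussian values: e^2 / (hbar * c).

    The permittivity is absorbed into the unit of charge in this system.
    """
    return E_CGS**2 / (HBAR_CGS * C_CGS)


if __name__ == "__main__":
    print("1/alpha from SI values: ", 1 / alpha_si())
    print("1/alpha from CGS values:", 1 / alpha_cgs())
```

Both lines should come out at about 137.036, even though the individual inputs differ by dozens of orders of magnitude between the two systems.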

The fine structure constant also shows up in some mystifyingly disparate applications. It's in the formulae used to determine the size of electron orbits in atoms. It is used to explain the odd splitting of spectral lines in the emission spectrum of elements, due to electrons with opposite spins interacting slightly differently with the orbitals they sit in. It shows up in calculations of optical conductivity of solids. It's used to figure out the probability of an atom absorbing or emitting a photon. It relates the speed of motion of an electron as it orbits the nucleus to the speed of light.

It pops up so frequently, in fact, that people involved in the search for extraterrestrial life have suggested that if we want to send a low-information-content, compact message out into space that will communicate to any intelligent species that we have reached the age of technology, all we need to do is send a message that says "1/137."

That's how ubiquitous it is.

It's a lucky thing, too -- to go back to the Anthropic Principle arguments -- that it has the value it does. If it were only a few percent larger, electrons would be so strongly bound by their nuclei that they wouldn't be able to interact with each other to form molecules; a few percent smaller, and they'd be bound so weakly atoms wouldn't form at all, and the whole universe would be a sea of loose elementary particles.

Weirdest of all, the current understanding of the fine structure constant is that it was much higher at the enormous energies immediately after the Big Bang, but then began to drop as the universe expanded and cooled. It decreased to 1/137 -- and then stopped there.

Why did it stop at that value, and not keep sliding all the way down to zero?

No one knows.

As my reader pointed out, even luminaries like Richard Feynman were deeply perplexed by this number. In his book QED: The Strange Theory of Light and Matter, Feynman wrote:

There is a most profound and beautiful question associated with the observed coupling constant, e – the amplitude for a real electron to emit or absorb a real photon. It is a simple number that has been experimentally determined to be close to 0.08542455. (My physicist friends won't recognize this number, because they like to remember it as the inverse of its square: about 137.03597 with an uncertainty of about 2 in the last decimal place. It has been a mystery ever since it was discovered more than fifty years ago, and all good theoretical physicists put this number up on their wall and worry about it.)

Immediately you would like to know where this number for a coupling comes from: is it related to pi or perhaps to the base of natural logarithms? Nobody knows. It's one of the greatest damn mysteries of physics: a magic number that comes to us with no understanding by humans. You might say the "hand of God" wrote that number, and "we don't know how He pushed His pencil." We know what kind of a dance to do experimentally to measure this number very accurately, but we don't know what kind of dance to do on the computer to make this number come out – without putting it in secretly!

Wolfgang Pauli was even more direct:

When I die my first question to the Devil will be: What is the meaning of the fine structure constant?

Thursday, October 27, 2022

Cosmic storms

Because we clearly don't have enough to worry about, a new paper in Proceedings of the Royal Society A describes apparent solar storm events captured in tree ring data that, if they happened today, would simultaneously fry every electronic device on Earth.

Those of you who are history buffs may think I'm talking about the 1859 Carrington Event, which caused auroras as near the equator as the Caribbean and triggered sparking, fires, and general failure in the telegraph system. But no: the repeated Miyake Events -- which occurred six times in the last ten thousand years, most recently in 993 C.E. -- are estimated to have been a hundred times more powerful than Carrington, and worse still, scientists have no clear idea what caused them.

The evidence comes from carbon-14 deposition rates. Carbon-14 is a radioactive isotope of carbon that is produced at a relatively steady rate when cosmic rays bombard nitrogen in the upper atmosphere. That C-14 ends up in atmospheric carbon dioxide and is then incorporated into plant tissue via photosynthesis. So tree ring C-14 content is a good indicator of the rate of radiation bombardment -- and the team, led by astrophysicist Benjamin Pope of the University of Queensland, have been analyzing six crazily high spikes of C-14 in tree rings, called "Miyake Events" after the scientist who first identified them.

Identifying the events is not the same as discovering their underlying cause, and the Miyake Events have the researchers stumped, at least for now. Solar storms tend to coincide with the eleven-year sunspot cycle, but the Miyake Events show no periodicity lining up with sunspots (or anything else, for that matter). There has even been speculation that they may not be of solar origin at all, but come from some source outside the Solar System -- perhaps a gamma-ray burster or Wolf-Rayet star -- but there are no known candidates that are anywhere near close enough to be responsible, especially given that the phenomenon (whatever it is) has occurred six times in the past ten thousand years.

So the Miyake Events may be real, honest-to-goodness cosmic storms. Not, I hasten to add, the nonsense from the abysmal 1960s series Lost in Space, wherein Will Robinson and Doctor Smith and the Robot would be amusing themselves, then suddenly the Robot would start flailing about and yelling "Danger! Danger! Cosmic storm imminent!" Then some wind would happen and blow over cardboard props and styrofoam rocks, and Will and Doctor Smith would pretend they were being flung about helplessly. In the midst of all this there would be a cosmic noise ("BWOYOYOYOYOYOY") and an alien would appear out of nowhere. These aliens included a space pirate (complete with an electronic parrot on his shoulder), a bunch of alien hillbillies (their spaceship looked like an old shack with a front porch), a motorcycle gang, a group of hippies, some space teenagers, and in one episode I swear I am not making up, Brünhilde, wearing a feathered helmet and astride a cosmic horse (which unfortunately appeared to be made of plastic). She then proceeded to yo-to-ho about the place until eventually Thor showed up, after which things got kind of ridiculous.

But I digress.

Anyhow, back to the real cosmic storms. The weirdest thing about the current research is the discovery that these were not sudden, one-and-done events like Carrington, which only lasted a few hours. "At least two, maybe three of these events... took longer than a year, which is surprising because that's not going to happen if it's a solar flare," Pope said, in an interview with ABC Science. "We thought we were going to have a big slam dunk where we could prove that [Miyake events were caused by] the Sun... This is the most comprehensive study ever made of these events and the big result is a big shrug; we don't know what's going on... There's a kind of extreme astrophysical phenomenon that we don't understand and it actually could be a threat to us."

So that's cheerful.

What's scariest about an event like this is that even though it wouldn't directly cause any harm to us, it could cause a simultaneous collapse of the entire electrical infrastructure, and the damage would take weeks or months even to begin to fix. Can you imagine? Not only no internet, but no GPS, no cellphones, maybe even no electrical grid. Air travel would be impossible without the radar and navigational systems that it relies on. For the first time since electricity became widespread, the world would suddenly go dark -- not only figuratively, but literally.

Research into what caused the Miyake Events is ongoing. Even if they can figure out what caused them, though, it's hard to see what, if anything, we could do about it. Chances are they'd occur without warning -- everything toodling along normally, then suddenly, wham.

Probably best not to worry about it.

Have a nice day.

Wednesday, October 26, 2022

Sounding off

Ever have the experience of getting into a car, closing the door, and accidentally shutting the seatbelt in the door?

What's interesting about this is that most of the time, we immediately realize it's happened, reopen the door, and pull the belt out. It's barely even a conscious thought. The sound is wrong, and that registers instantly. We recognize when something "sounds off" about noises we're familiar with -- when latches don't seat properly, when the freezer door hasn't completely closed, even things like the difference between a batter's solid hit and a tip during a baseball game.

Turns out, scientists at New York University have just figured out that there's a brain structure that's devoted to that exact phenomenon.

A research team led by neuroscientist David Schneider trained mice to associate a particular sound with pushing a lever for a treat. Once the mice had learned the sound, it became as ingrained in their brains as our own expectation of what the car door closing is supposed to sound like. If after that the tone was varied even a little, or the timing between the lever push and the sound was changed, a part of the mouse's brain began to fire rapidly.

The activated part of the brain is a cluster of neurons in the auditory cortex, but I think of it as the "What The Hell Just Happened?" module.

"We listen to the sounds our movements produce to determine whether or not we made a mistake," Schneider said. "This is most obvious for a musician or when speaking, but our brains are actually doing this all the time, such as when a golfer listens for the sound of her club making contact with the ball. Our brains are always registering whether a sound matches or deviates from expectations. In our study, we discovered that the brain is able to make precise predictions about when a sound is supposed to happen and what it should sound like... Because these were some of the same neurons that would have been active if the sound had actually been played, it was as if the brain was recalling a memory of the sound that it thought it was going to hear."

Tuesday, October 25, 2022

Butterfly effect

Most of you probably know the basic story you were taught in high school biology; DNA has information-containing chunks called genes, which sit in specific places in packages called chromosomes. Each of those genes is the instruction set for building a specific protein, and the instructions are read by creating an intermediary copy (called mRNA) of the specific gene in question, which then is transported to a structure in the cell called the ribosome, where the sequence is read and used to assemble the protein from smaller bits called amino acids. The protein thus created -- it could be an enzyme, a structural protein, an energy carrier, a pigment, or any of dozens of other types -- goes on to do its specific job in the organism.

This pattern -- gene (DNA) to mRNA to protein -- was thought to be more or less the whole story by the people who unraveled the pattern, James Watson and Francis Crick, so in their typical self-congratulatory fashion they called it the "Central Dogma of Molecular Genetics." (And if you know anything about their history, you'll understand why I'm calling it "typical.") So it was a considerable shock when researchers found out that there was DNA in the genome of every species studied that didn't work this way.

It was a considerably bigger shock when it was found that the amount of DNA that did work this way was around one percent.

You read that right; between ninety-eight and ninety-nine percent of your genome is non-coding DNA. It does not encode the instructions for building proteins. Watson and Crick's "Central Dogma" only applies directly to less than two percent of an organism's DNA. When this was discovered in the 1960s and 1970s, researchers (speaking of arrogance...) called it "junk DNA," following the apparent line of reasoning, "If we don't know what it does right now, it must be useless."

The whole "junk DNA" moniker never made sense to me, especially with the discovery of short tandem repeats, chunks of DNA between two and ten base pairs long that repeat over and over again (the average number of repeats is twenty-five). Short tandem repeats are common in eukaryotic DNA, and their function is unknown. That they do have a function -- and are not, in fact, "junk" -- is strongly supported by the fact that they're evolutionarily conserved. Mutations happen, and if there really was no function at all for STRs, over time the pattern would get lost as mutations altered one base pair after another. The fact that the pattern has been maintained argues that they have an important function of some kind, and mutations knock that function out and are heavily selected against.

We just don't know what it is yet.

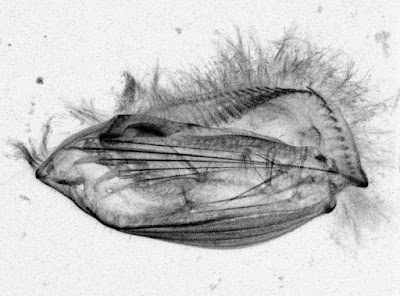

The reason all this comes up is the discovery by a team right here in my neck of the woods, at Cornell University, that at least some of the patterns in butterfly wings are controlled by "genetic switches" in their non-coding DNA. The team, led by Anyi Mazo-Vargas, looked at forty-six regions of non-coding DNA, and found out that a significant number of them, when disabled (a technique called gene knockout), cause huge changes in the butterfly's wing pattern. Take, for example, the brightly-colored Heliconius butterflies:

Knock out a DNA sequence called WntA, and the stripes disappear; disable Optix, and the wings come out jet black.

Other than the obvious deduction -- that these non-coding sequences are acting as switches determining deposition of pigments and arrangements of the cells that generate iridescence -- not much is known about how these sequences work. "We see that there's a very conserved group of switches that are working in different positions and are activated and driving the gene," Mazo-Vargas said.

"We have progressively come to understand that most evolution occurs because of mutations in these non-coding regions," added Robert Reed, who co-authored the paper. "What I hope is that this paper will be a case study that shows how people can use this combination of ATAC-seq and CRISPR [two techniques used in gene modification and knockout] to begin to interrogate these interesting regions in their own study systems, whether they work on birds or flies or worms."

So once again, as we look more closely at things, we find intricacies we never dreamed of. What I love about research like this is that a seemingly small discovery -- that small stretches of non-coding DNA control macroscopic traits like coloration -- could have an impact on our understanding of how genetics works in general.

Truly -- a "butterfly effect."

Monday, October 24, 2022

The palimpsest

Sophocles was a classical Greek playwright, whose beautiful and haunting tragedies Oedipus Rex, Antigone, and Oedipus at Colonus are standard fare in college literature classes. Those three plays are his most famous, but there are four others still read and performed -- Ajax, The Women of Trachis, Electra, and Philoctetes.

Those seven plays are all that is left of the 120 plays he wrote.

Nineteen of Euripides's plays survive, out of nearly a hundred. Seven of Aeschylus's plays still exist, from an estimated eighty. Nothing is left of the work of the great scientists Anaxagoras, Eratosthenes, Aristarchus, and Thales except for fragments.

What we have from the classical Greek and Roman world is just what happened to survive, sometimes by design and sometimes by pure accident. Fires, some of which were set deliberately, claimed a great many ancient manuscripts. The Great Library of Alexandria, which housed huge numbers of these works, endured loss after loss. A fire set by the troops of Julius Caesar in 48 B.C.E. that was intended to destroy Egyptian ships at the port spread to the rest of the city and burned an estimated forty thousand scrolls in the Library. Repeated sieges of the city under the Roman Emperors Aurelian and Diocletian three hundred years later destroyed a good percentage of what was left. The rest of the surviving manuscripts met their end under two onslaughts from organized religion. The first came in the early fifth century C.E. from the Christian Bishop Cyril, who not only worked to destroy the remnants of the Library, but had one of its leading scholars -- Hypatia -- brutally murdered by a mob. (Cyril, on the other hand, went on to be canonized.) The second came after the Muslim takeover of Egypt in the seventh century C.E., when the Caliph Omar ordered all of the remaining scrolls collected and burned for fuel. Omar famously remarked, "If those books are in agreement with the Quran, we have no need of them; and if they are opposed to the Quran, destroy them."

What we know of pre-Dark-Ages European science and literature is so sparse that "fragmentary" seems a significant understatement. It's as if we were trying to understand the works of William Shakespeare while having in hand Timon of Athens, Cymbeline, the last twenty pages of Coriolanus, and five randomly chosen sonnets.

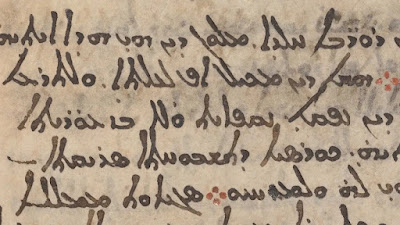

There still remains some shred of hope for recovering some of this lost wealth of knowledge, however. Researchers believe they have found pieces of the second century B.C.E. astronomer Hipparchus's lost manuscript Star Catalogue on a palimpsest -- a piece of parchment that had been erased for reuse. Because parchment was expensive (it was made from specially tanned lamb or calf skin), used parchment was often scraped to remove the previous writing and then repurposed. Using multispectral imaging, a non-destructive technique for picking up faint traces of ink on manuscripts, a team from the Université Sorbonne and Cambridge University have uncovered what seems to be the beginning of Hipparchus's lost work in the background of the Codex Climaci Rescriptus, a Syriac Greek document from the eleventh century C.E.

You have to wonder what other gems from the ancient world lie hidden on palimpsests.

How amazing would it be to reclaim some of these windows into the past from manuscripts currently sitting in libraries and museums? What would we learn about our forebears' history, science, and philosophy? Until we can invent a time machine and go back to visit the Library of Alexandria -- the pipe-dream of many a scholar -- the best we can do is apply our modern technology to what we have.

And to judge by the recent discoveries, there may be more to find than we ever realized.

Saturday, October 22, 2022

The law of whatever works

One common misconception about the evolutionary model and natural selection is that it always leads to greater fitness.

It's fostered by the way the subject is taught; that evolution always works by "survival of the fittest," so over time organisms always get bigger, stronger, faster, and smarter. There's a kernel of truth there, of course; natural selection does favor the organisms that survive longer and have more offspring. But there are a number of complicating factors that make this oversimplified impression far wide of the truth.

First, evolution -- in the sense of changes in gene frequencies in populations -- can happen for other reasons besides fitness-based natural selection. One common example is sexual selection, when a characteristic having little to nothing to do with fitness is the basis for mate choice, such as bright color in the males of many bird species. This has led to sexual dimorphism -- males and females have dramatically different external appearance, as the females choose ornately-adorned mates who then pass those genes on to their offspring.

Second, the selecting factors can change when the environment does. What is an advantage today can be a disadvantage tomorrow. This can lead to what's called an evolutionary misfire -- when some characteristic that was beneficial turns deadly as the conditions change. A particularly interesting example of this is the way moths navigate. Moths, like most insects, have compound eyes, hemispherical structures with multiple radially-oriented facets. They find their way around at night by using distant light sources (such as stars and the Moon), keeping the light from those objects shining into one facet of the eye. If the light source is distant, this allows the moth to fly in a straight line. The problem is, moths evolved in a context where there were no nearby light sources at night; and if you try this orientation trick on a close-up light source, you end up flying in circles.

And this is why moths spiral around, and eventually get fried by, candles, lanterns, and streetlights.
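For anyone who wants to see the geometry in action, here is a rough simulation sketch in Python. It is a toy model, not anything taken from moth research: the simulated moth takes small steps and always keeps the light at a fixed angle to the left of its direction of travel. The function name `fly`, the eighty-degree angle, the step size, and the `capture_radius` are all assumptions I picked for the illustration.

```python
import math


def fly(light, angle_deg=80.0, step=0.1, n_steps=2000, capture_radius=0.5):
    """Toy moth: start at the origin and hold the light at a fixed angle.

    Returns (distance left to the light, total turning in radians).
    All parameter values are illustrative assumptions.
    """
    x, y = 0.0, 0.0
    offset = math.radians(angle_deg)
    turning = 0.0
    prev_heading = None
    for _ in range(n_steps):
        dx, dy = light[0] - x, light[1] - y
        if math.hypot(dx, dy) < capture_radius:
            break  # close enough to count as hitting the lamp
        # Direction to the light, minus the fixed offset the moth maintains,
        # so the light always sits `offset` to the left of the flight path.
        heading = math.atan2(dy, dx) - offset
        if prev_heading is not None:
            # Wrap the change in heading into [-pi, pi) before accumulating.
            delta = (heading - prev_heading + math.pi) % (2 * math.pi) - math.pi
            turning += delta
        prev_heading = heading
        x += step * math.cos(heading)
        y += step * math.sin(heading)
    distance_left = math.hypot(light[0] - x, light[1] - y)
    return distance_left, abs(turning)


if __name__ == "__main__":
    moon = (1.0e9, 0.0)     # effectively at infinity
    candle = (10.0, 0.0)    # a nearby light
    print("distant light:", fly(moon))
    print("nearby light: ", fly(candle))
```

With the distant light, the total turning stays essentially zero, so the path is a straight line. With the nearby light, the same rule makes the path wind around the lamp several times while the distance shrinks, which is the spiral described above.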

Third, many -- probably most -- genes have multiple effects, a phenomenon called pleiotropy. A single gene that can provide a benefit in one way can provide a serious disadvantage in another. This is, in fact, why this topic comes up today; scientists at the University of Chicago showed that a gene -- ERAP2 -- that gave people in the fourteenth century a better shot at surviving the bubonic plague also predisposes them (and their descendants) to Crohn's disease.

The link, of course, is the immune system; Crohn's is an autoimmune disease, one where the body's immune system starts fighting against its own tissue, in this case the lining of the digestive tract. The improvement in survival during the Black Death was significant -- an estimated forty percent increase in the chances of fighting off the disease -- so during the pandemic, the increase in risk for Crohn's was an acceptable price to pay for avoiding near-certain death from the plague.

Evolution is, truly, "the law of whatever works at the time." It's not forward-looking; the forces of selection act on whatever genes the population has currently got, and the set of genes which is able to make more copies of itself is the one that gets passed on to the next generation. This can happen for reasons of fitness, but also for many other reasons, including pure random chance (what is called genetic drift).

And because of this, the genetic hand we were dealt can sometimes have untoward consequences -- in a world where the bubonic plague has in most places been eradicated, a gene that had a beneficial effect now turns out to be a significant disadvantage.

Friday, October 21, 2022

Microborgs

Thursday, October 20, 2022

Mosquito magnets

Wednesday, October 19, 2022

The walk of life

There are countless different actions we take every day that we do so automatically we're hardly even aware of how complex they are.

Take, for example, walking. Walking takes the coordination of dozens of muscles, each of which has to contract and relax in exactly the right sequence to propel us forward and adjust for irregularities of the terrain we're navigating. To avoid falling, we need to keep our center of gravity over our base of support, which is aided by adjustments in posture and such counterbalancing movements as swinging the arms. But in order to do that we have to keep track of proprioception -- our sense of where our bodies are -- which is accomplished by a whole array of sense organs, including vision, the tactile sensors in the skin, and the semicircular canals -- the balance organs in the inner ear, which work a little like a carpenter's level.

And all of those -- the sense organs that keep track of what's going on and the muscles that use that information to contract and relax at the right times -- are linked by an astonishingly complex set of nerve relays and circuits coordinated by our brains.

All of that, just to get up and walk across the room.

The reason the topic of locomotion comes up is a paper a couple of weeks ago in Current Biology describing a single-celled protist called Euplotes eurystomus that has fourteen leg-like appendages -- and is able to walk.

The scientists studying Euplotes found that the appendages had thirty-two different configurations, which they called "gait states," and that somehow, the little creature was keeping track of which sequence of gait states allowed for the most efficient walking. It turned out that the internal scaffolding of the cell, made of hollow threads called microtubules, created cross-links from each appendage to the others. The amount of tension in the microtubules allows the organism to coordinate the movement of all fourteen appendages.

"The fact that Euplotes' appendages are moving from one state to another in a non-random way means this system is like a rudimentary computer," said Wallace Marshall, of the University of California - San Francisco, who co-authored the paper.
