One of the shakiest concepts in biological anthropology is race.

Pretty much all biologists agree that race, as usually defined, has very little genetic basis. Note that I'm not saying race doesn't exist, just that it's primarily a cultural phenomenon rather than a biological one. Given that race has been used as the basis for systematic oppression for millennia, it would be somewhere beyond disingenuous to claim that it isn't real.

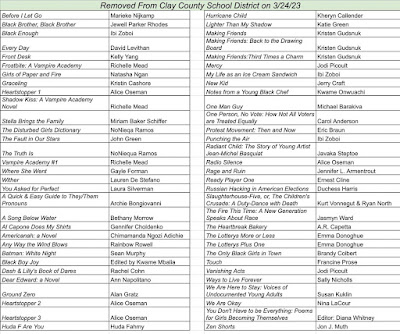

The problem is, determination of race has usually been based upon a handful of physical characteristics, most often skin, eye, and hair pigmentation and the presence or absence of an epicanthal fold across the inner corner of the eye. These traits are not only superficial and not necessarily indicative of an underlying relationship, the pigment-related ones are highly subject to natural selection. Back in the nineteenth and early twentieth century, however, this highly oversimplified and drastically inaccurate criterion was used to develop maps like this one:

This subdivides all humanity into three groups -- "Caucasoid" (shown in various shades of blue), "Negroid" (shown in brown), and "Mongoloid" (shown in yellow and orange). (The people of India and Sri Lanka, shown in green, are said to be "of uncertain affinities.") If you're jumping up and down saying, "Wait, but... but..." -- well, you should be. The lumping together of people like Indigenous Australians and all sub-Saharan Africans (based mainly on skin color) is only the most glaring error. (Another is that any classification putting the Finns, Polynesians, Koreans, and Mayans into a single group has something seriously amiss.)

The worst part of all this is that maps like this one were used to justify colonialism. If you believed that there really was a qualitative (read: genetic) difference between the "three great races," it was only one step to deciding which one was the best and shrugging off its subjugation of the other two.

The truth is way more complicated, and way more interesting. By far the greatest genetic diversity in the world is found in sub-Saharan Africa; a 2009 study by Jeffrey Long found more genetic differences between individuals from two different ethnic groups in central Africa than between a typical White American and a typical person from Japan. To quote a paper by Long, Keith Hunley, and Graciela Cabana that appeared in the *American Journal of Physical Anthropology* in 2015: "Western-based racial classifications have no taxonomic significance."

The reason all this comes up (besides, of course, its continuing relevance to the systematic racial oppression still happening in many parts of the world, including the United States) is a paper that appeared last week in *Nature* on the genetics of the Swahili people of East Africa, a large ethnic group extending from southern Somalia down to northern Mozambique. The Swahili are usually thought of as a quintessentially sub-Saharan African population, but the medieval individuals whose DNA was analyzed turned out to have only around half of their genetic ancestry from African sources; the other half came from Asia, primarily Persia, India, and Arabia.

The authors write:

> [We analyzed] ancient DNA data for 80 individuals from 6 medieval and early modern (AD 1250–1800) coastal towns and an inland town after AD 1650. More than half of the DNA of many of the individuals from coastal towns originates from primarily female ancestors from Africa, with a large proportion—and occasionally more than half—of the DNA coming from Asian ancestors. The Asian ancestry includes components associated with Persia and India, with 80–90% of the Asian DNA originating from Persian men. Peoples of African and Asian origins began to mix by about AD 1000, coinciding with the large-scale adoption of Islam. Before about AD 1500, the Southwest Asian ancestry was mainly Persian-related, consistent with the narrative of the Kilwa Chronicle, the oldest history told by people of the Swahili coast. After this time, the sources of DNA became increasingly Arabian, consistent with evidence of growing interactions with southern Arabia. Subsequent interactions with Asian and African people further changed the ancestry of present-day people of the Swahili coast in relation to the medieval individuals whose DNA we sequenced.

Note that on the Meyers Konversations-Lexikon map, the Arabians and Persians are classified as "Caucasoid," the Indians as being "of uncertain affinities," and the Swahili as definitely "Negroid."
